OpenSearch

One platform. Full visibility.

Faster insights.

Unify logs, metrics, traces, APM, and dashboards in a single, OpenTelemetry-native platform built for modern applications and AI agents. Eliminate fragmented tools and gain end-to-end visibility across your entire stack—from infrastructure, to microservices, to distributed systems, to AI agents.

Quick start with OpenSearch Observability Stack

With the OpenSearch Observability Stack, you can deploy a preconfigured, full-stack solution in minutes. It bundles OpenTelemetry Collector, Prometheus, OpenSearch Data Prepper, and OpenSearch Dashboards. Own your data completely: no vendor lock-in, no licensing surprises.

Sign up here for early notice of upcoming Observability webinars hosted by the OpenSearch Software Foundation and its members.

Observability for every layer of your stack

OpenSearch observability spans interconnected capability areas — each purpose-built for a different layer of your stack, but designed to work together as a unified experience.

Distributed tracing and APM

See inside your applications with distributed tracing, service maps, latency breakdowns, and error tracking across microservices. OpenTelemetry-native instrumentation with automatic RED metrics (Rate, Errors, Duration) computed from your trace data. Correlate traces with logs in a single click to move from “this request was slow” to “here’s the error log that explains why.”
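To make the RED idea concrete, here is a minimal sketch of how Rate, Errors, and Duration could be derived from a batch of spans. It is an illustration only, not how Data Prepper implements it: the `Span` class, its field names, and the fixed 60-second window are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Span:
    # Hypothetical, simplified span record (not the Data Prepper schema)
    service: str
    duration_ms: float
    is_error: bool


def red_metrics(spans, window_seconds):
    """Aggregate Rate, Errors, and Duration per service for one time window."""
    by_service = defaultdict(list)
    for span in spans:
        by_service[span.service].append(span)

    result = {}
    for service, group in by_service.items():
        count = len(group)
        errors = sum(1 for s in group if s.is_error)
        result[service] = {
            "rate_per_s": count / window_seconds,       # Rate
            "error_ratio": errors / count,              # Errors
            "avg_duration_ms": sum(s.duration_ms for s in group) / count,  # Duration
        }
    return result


spans = [
    Span("checkout", 120.0, False),
    Span("checkout", 480.0, True),
    Span("cart", 30.0, False),
]
metrics = red_metrics(spans, window_seconds=60)
print(metrics["checkout"])
```

A production pipeline would typically report duration as percentiles or histograms rather than a plain average; the average is used here only to keep the sketch short.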

AI Agent observability

Trace agent reasoning chains, visualize tool-call sequences and decision flows, benchmark correctness with LLM-as-judge evaluation, and compare agent performance across models and configurations. Built on OpenTelemetry GenAI semantic conventions.
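As a rough illustration of comparing agent runs across models, the sketch below totals token usage per model from span attributes. The attribute keys follow the OpenTelemetry GenAI semantic conventions (`gen_ai.request.model`, `gen_ai.usage.input_tokens`, `gen_ai.usage.output_tokens`); the sample data, the model names, and the `tokens_by_model` helper are hypothetical.

```python
from collections import defaultdict

# Each dict stands in for the attributes of one LLM-call span (sample data).
span_attributes = [
    {"gen_ai.request.model": "model-a", "gen_ai.usage.input_tokens": 200, "gen_ai.usage.output_tokens": 150},
    {"gen_ai.request.model": "model-b", "gen_ai.usage.input_tokens": 180, "gen_ai.usage.output_tokens": 90},
    {"gen_ai.request.model": "model-a", "gen_ai.usage.input_tokens": 100, "gen_ai.usage.output_tokens": 50},
]


def tokens_by_model(spans):
    """Sum call counts and token usage for each requested model."""
    totals = defaultdict(lambda: {"calls": 0, "input_tokens": 0, "output_tokens": 0})
    for attrs in spans:
        model = attrs.get("gen_ai.request.model", "unknown")
        totals[model]["calls"] += 1
        totals[model]["input_tokens"] += attrs.get("gen_ai.usage.input_tokens", 0)
        totals[model]["output_tokens"] += attrs.get("gen_ai.usage.output_tokens", 0)
    return dict(totals)


usage = tokens_by_model(span_attributes)
for model, stats in sorted(usage.items()):
    print(model, stats)
```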

Log analytics

Search, correlate, and alert on your logs. Full-text search with PPL, structured log ingestion via Data Prepper and Fluent Bit, and log-to-trace correlation through shared context (trace ID, span ID). OTel-native log collection via the OTLP protocol.
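Log-to-trace correlation comes down to joining records on the shared context. The sketch below shows that join in plain Python, assuming both spans and logs carry `traceId` and `spanId` fields; the `correlate` helper, the `body` field, and the sample records are hypothetical and do not reflect a specific OpenSearch index mapping.

```python
from collections import defaultdict


def correlate(spans, logs):
    """Attach to each span the log records that share its trace ID and span ID."""
    logs_by_context = defaultdict(list)
    for log in logs:
        logs_by_context[(log["traceId"], log["spanId"])].append(log)

    return [
        {**span, "logs": logs_by_context.get((span["traceId"], span["spanId"]), [])}
        for span in spans
    ]


spans = [
    {"traceId": "abc123", "spanId": "s1", "name": "POST /checkout"},
    {"traceId": "abc123", "spanId": "s2", "name": "SELECT orders"},
]
logs = [
    {"traceId": "abc123", "spanId": "s2", "body": "connection pool exhausted"},
    {"traceId": "zzz999", "spanId": "s9", "body": "unrelated request"},
]

for span in correlate(spans, logs):
    print(span["name"], [log["body"] for log in span["logs"]])
```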

Metrics and Prometheus

Time-series metrics with native PromQL support. Data Prepper automatically computes RED metrics from trace data, and the Observability Stack supports Prometheus remote-write for infrastructure metrics. Query with PromQL, build Grafana dashboards, or use OpenSearch Dashboards panels.

OpenTelemetry

Use OpenTelemetry standard instrumentation across all your workflows. OpenSearch observability is built on OTel from the ground up: all data ingestion uses OTel protocols and the same semantic conventions, so your existing instrumentation stays portable across any OTel-compatible backend.

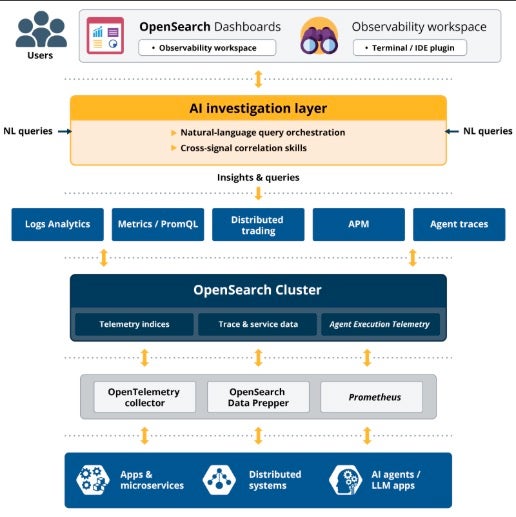

Observability architecture in OpenSearch

OpenSearch observability is powered by a unified platform architecture — a core search and analytics engine, a pipeline processor for ingestion, and a visualization layer — working together to collect, store, analyze, and act on logs, metrics, and traces.

Anomaly detection, log analytics, trace correlation, and alerting all run as plugins inside a single distributed engine, sharing the same indexes, the same security model, and the same query language. The architecture diagram below shows how this works: a small number of components that combine to cover use cases that typically require three or four separate tools.

OpenTelemetry Collector

A vendor-neutral telemetry pipeline that collects, processes, and exports logs, metrics, and traces from applications and infrastructure.

OpenSearch Data Prepper

A server-side data collector and pipeline processor that filters, enriches, transforms, normalizes, and aggregates telemetry before it is stored in OpenSearch.

Prometheus

A metrics collection and monitoring system that OpenSearch integrates with to provide time-series observability data — CPU, memory, RPS, error rates, latency.

OpenSearch Core Platform

The scalable data platform and analytics engine. Stores, indexes, and queries logs, metrics, traces, and AI telemetry — the single source of truth for observability.

AI Investigation Layer

The intelligent control plane. Uses AI to interpret user intent, orchestrate queries across data sources, correlate signals, and automatically identify root causes.

OpenSearch Dashboards

The visualization layer. Interactive dashboards, observability views (logs, metrics, traces, APM), and increasingly natural-language-driven investigation.

PPL

A query language designed for log and event analysis. Chain commands (like pipes) to filter, transform, and analyze data efficiently across all observability data.

Why OpenSearch?

The truly open-source observability suite

OpenSearch is a distributed, community-driven, fully open-source search and analytics suite. Built-in security, scalable capacity and performance, and support for high availability help make OpenSearch a solid foundation for enterprise-grade applications across search, observability, security analytics, and more. You can run OpenSearch on premises or in hybrid or multicloud environments and put its broad and deep feature set to work as you see fit—with no licensing fees.

Self-host anywhere without vendor lock-in

OpenSearch is Apache 2.0 licensed and governed by the Linux Foundation. Build without fear of feature gating or risk of relicensing.

Unified platform instead of fragmented tools

Log analytics, trace correlation, anomaly detection, and alerting run inside a single distributed engine that shares the same indexes, security model, and query language, covering use cases that typically require three or four separate tools.

OpenTelemetry-native

Built on OpenTelemetry from the ground up. All data ingestion uses OTel protocols and the same semantic conventions, so your existing instrumentation is portable across any OTel-compatible backend.

GenAI-first

Purpose-built views for AI agent tracing using standard GenAI semantic conventions. GenAI trace visualizations. LLM-as-judge evaluation. No other open-source observability platform offers these capabilities natively.

Predictable costs

Self-hosted with predictable costs. No per-host, per-GB, or per-seat cost surprises.

Fast time to value

Deploy observability with minimal setup and realize value in minutes instead of weeks.

OpenSearch platform capabilities for observability

OpenSearch provides core platform capabilities that underpin every observability workflow. These tools work across all observability use cases — from traditional infrastructure monitoring to AI agent evaluation.

Query with PPL

Piped Processing Language (PPL) is an intuitive query language for observability workflows. You can use it to filter, transform, aggregate, and visualize telemetry data.

PPL is the standard query language of the OpenSearch platform, and it is used across all OpenSearch solution areas.

```
source = ss4o_logs-*
| where severity = "ERROR"
| stats count() by service.name
| sort - count()
```
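For readers new to piped query languages, the query above can be read as three stages applied in order. The sketch below mirrors those stages over a small in-memory list; it only illustrates the semantics and is not how OpenSearch executes PPL. The sample records are hypothetical.

```python
from collections import Counter

# Hypothetical log records standing in for documents in ss4o_logs-*
logs = [
    {"severity": "ERROR", "service.name": "checkout"},
    {"severity": "INFO", "service.name": "checkout"},
    {"severity": "ERROR", "service.name": "cart"},
    {"severity": "ERROR", "service.name": "checkout"},
]

# | where severity = "ERROR"
errors = (record for record in logs if record["severity"] == "ERROR")

# | stats count() by service.name
counts = Counter(record["service.name"] for record in errors)

# | sort - count()   (descending by count)
ranked = counts.most_common()

print(ranked)  # [('checkout', 2), ('cart', 1)]
```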

Control plane for observability

The AI Investigation Layer is a new capability for the OpenSearch platform. It acts as a control plane that moves observability from manual analysis to AI-driven investigation. Instead of querying data manually, you describe the problem and the layer runs the investigation.

How it transforms your workflow:

1. Users ask a question in natural language.
2. The system generates queries automatically.
3. The system correlates signals across logs, metrics, and traces.
4. The system surfaces root causes automatically.

Natural language to root cause in seconds

The AI investigation layer uses natural language query orchestration and cross-signal correlation skills to interpret your intent, correlate signals across logs, metrics, and traces, and surface root causes automatically.

```
// Natural language query example:
// "Why is checkout service slow for EU users?"

// AI layer orchestrates:
// 1. Query trace data for EU region, checkout service
// 2. Correlate with error logs matching trace IDs
// 3. Pull CPU/memory metrics for affected pods
// 4. Surface anomaly: DB connection pool exhausted
//    at 14:32 UTC → cascading latency spike

// Result: root cause identified in < 30 seconds
```

One interface for all your observability data

OpenSearch Dashboards provides interactive visualizations, the Observability workspace (traces, services, notebooks), APM views, and increasingly natural-language-driven investigation via the AI investigation layer.

```
// Dashboards Observability workspace
// Surfaces: Trace Analytics · Service Map · Log Explorer
//           Metrics · Alerts · Reports · Notebooks

// Connect Prometheus for infrastructure metrics:
GET /_plugins/_prometheus/api/v1/query?query=rate(http_requests_total[5m])

// PPL log query in Discover:
source = otel-v1-apm-span*
| where traceId = "abc123"
| fields spanId, name, durationInNanos
```
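The same PPL query can also be sent programmatically. The sketch below builds a request for the OpenSearch PPL REST endpoint (`POST /_plugins/_ppl`) using only the Python standard library. The base URL `http://localhost:9200`, the absence of authentication, and the `build_ppl_request` helper are assumptions for a local test cluster; adjust them for your deployment.

```python
import json
import urllib.request


def build_ppl_request(base_url, query):
    """Build (but do not send) a POST request for the PPL endpoint."""
    body = json.dumps({"query": query}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/_plugins/_ppl",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_ppl_request(
    "http://localhost:9200",  # assumed local cluster without security enabled
    'source = otel-v1-apm-span* | where traceId = "abc123" '
    "| fields spanId, name, durationInNanos",
)
print(request.get_method(), request.full_url)

# To run the query against a live cluster, send the request:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

Building the request separately from sending it keeps the example runnable without a cluster and makes the payload easy to inspect.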

Observability in Action

| When you need to… | Use | Docs |
|---|---|---|
| Debug a slow microservice | APM and distributed tracing | APM docs → |
| Monitor AI agent behavior | AI agent observability | Agent Health → |
| Centralize application logs | Log analytics | Log ingestion docs → |
| Track infrastructure metrics | Metrics and Prometheus | Metrics docs → |
| Build a custom dashboard | OpenSearch Dashboards | Dashboards docs → |
| Set up anomaly-based alerts | Alerting plugin | Alerting docs → |
| Query across clusters | Cross-cluster search | CCS docs → |
| Deploy on Kubernetes | K8s Operator | K8s Operator docs → |

Are you ready to go zero to full-stack in minutes?

With the OpenSearch Observability Stack, you can deploy a preconfigured, full-stack solution in minutes. It bundles OpenTelemetry Collector, Prometheus, OpenSearch Data Prepper, and OpenSearch Dashboards. Own your data completely: no vendor lock-in, no licensing surprises.

QUICK START

Docker, Kubernetes, or bare metal. Full stack in 5 minutes.

```shell
curl -fsSL https://raw.githubusercontent.com/opensearch-project/observability-stack/main/install.sh | bash
```

Choose your integration style

Three paths to production observability. Pick the one that fits your workflow.

GenAI SDK

One-line setup with automatic OpenTelemetry instrumentation. Decorators for agents, tools, and workflows.

example.py

```python
from opensearch_genai_sdk_py import register, agent, tool

# One-line setup: configures the OTel pipeline automatically
register(service_name="my-app")


@tool(name="get_weather")
def get_weather(city: str) -> dict:
    return {"city": city, "temp": 22, "condition": "sunny"}


@agent(name="weather_assistant")
def assistant(query: str) -> str:
    data = get_weather("Paris")
    return f"{data['condition']}, {data['temp']}C"


# Automatic OTel traces, metrics, and logs
result = assistant("What's the weather?")
```


Manual OTEL instrumentation

Full control over your observability. Use standard OTEL APIs directly.

example.py

```python
from opentelemetry import trace
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter

# Configure OTel to export to the Observability Stack
provider = TracerProvider()
exporter = OTLPSpanExporter(endpoint="http://localhost:4317")
provider.add_span_processor(BatchSpanProcessor(exporter))
trace.set_tracer_provider(provider)

# Use standard OTel APIs
tracer = trace.get_tracer(__name__)

with tracer.start_as_current_span("agent_task") as span:
    # `llm` and `prompt` are placeholders for your own model client and input
    response = llm.generate(prompt)
    span.set_attribute("gen_ai.request.model", "gpt-4")
    span.set_attribute("gen_ai.usage.output_tokens", 150)
```


Bring your own OTEL setup

Already using OTEL? Just point your exporter to Observability Stack. Keep your existing setup.

example.py

```python
from opentelemetry import trace
from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter

# Add Observability Stack as an additional exporter
# Keep your existing OTel configuration
exporter = OTLPSpanExporter(
    endpoint="http://localhost:4317"
)

# Look up the SDK TracerProvider your application already configured
trace_provider = trace.get_tracer_provider()

# Add to your existing trace provider
trace_provider.add_span_processor(
    BatchSpanProcessor(exporter)
)

# Your existing OTel instrumentation continues to work
# Traces now flow to both your existing backend AND Observability Stack
```

Key benefits

- Keep your existing OTel setup
- Multi-backend support (send to multiple destinations)
- No code changes required
- Works with any OTel collector